-

There are other places where a general-purpose floodcontrol/firewall would help, and we should aim to minimise overlap between these projects.

Can floodcontrol use firewall's more general API, for example?

My thoughts on firewall 0.1

(This also documents my understanding from reading the code, please correct me if I've got it wrong!)

Currently it provides:

- a CSRF token generator and checker: the token is stored in the session, and the token sent back must match the one in the session.

- a fraud event

- a function to abruptly terminate a request with a 403 if a bot is detected.

- two (almost identical) Symfony events that you must trigger in code by knowing the class name, with separate listeners for each event.

I think what we need is:

- A way to record a range of events. These could be categorised, sure, but different orgs will require different events.

- A way to record things that should not happen too often, so we can help detect a bot. This is not just fraud events or invalid CSRF tokens: if one IP is submitting zillions of valid requests, it's probably a bot.

- CSRF tokens that are more tightly limited. Different situations may require narrower or broader limits.

  - Could include a time window (i.e. not just an expiry but a not-until timestamp too). This could be used to prevent forms being submitted too quickly, as floodcontrol does.

  - Could be tied to the IP address. We're leaning heavily on IP addresses for blocking anyway, so we're already accepting the problems (e.g. mobile users' IPs may change as they move around, IIRC). This would stop session sharing across a botnet.

  - Could enforce single-use CSRF tokens. Tricky and expensive (DB writes; could use Redis).

  - Could be tied to a purpose, so instead of one CSRF token for the whole site we have one for the Stripe form, one for the contact form, etc.

- A way for people to configure an algorithm for detecting baddies. E.g. 5 successful payments in a short period may be OK (multiple bookings by people in a café chatting about how they must go to the next CiviCRM event!), but 5 successful payments alongside an invalid CSRF token and 4 declined payments is probably not OK.

- Reports, tests and documentation: how is it supposed to work, and does it? The reporterror extension may be useful.

- Forensic logs: when a bot is attacking, it's easy to jump to wrong conclusions about its behaviour, but knowing the exact pattern of behaviour is important for reacting to it. E.g. is it a single IP using `curl` or `python` to fire loads of requests? Are they doing a GET or a POST, or a pattern of both? What exact data are they sending (query + POST + URL)?

- Configurable cleanup of logs, tokens and timeouts.

-

I feel like @totten would have some useful comments re architecture...

-

Note: this wasn't for a credit card form (just a subscribe webform), but I've recently spotted some behaviour that looks like it was using Tor nodes to submit, which means we might need to think about more than just IP address detection. They were using random character strings for names. I ended up using frequency analysis of existing first names/last names alongside the ratio of upper- to lowercase letters to identify those that got in.
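As a rough illustration of those two signals, here is a minimal Python sketch. It assumes "frequency analysis" means checking how often each name already appears among existing contacts; `uppercase_ratio`, `looks_random` and the 0.5 threshold are hypothetical, not the values actually used on that site.

```python
from collections import Counter


def uppercase_ratio(name: str) -> float:
    """Fraction of the letters in `name` that are uppercase (0.0 if there are none)."""
    letters = [c for c in name if c.isalpha()]
    if not letters:
        return 0.0
    return sum(c.isupper() for c in letters) / len(letters)


def looks_random(first: str, last: str, known_names: Counter,
                 max_upper_ratio: float = 0.5) -> bool:
    """Flag a contact whose names are both unseen and oddly capitalised.

    known_names counts how often each lower-cased name already appears among
    existing contacts; max_upper_ratio is a hypothetical threshold.
    """
    unseen = known_names[first.lower()] == 0 and known_names[last.lower()] == 0
    odd_case = max(uppercase_ratio(first), uppercase_ratio(last)) > max_upper_ratio
    return unseen and odd_case


# Name counts built from existing contacts (made-up sample data).
known = Counter(["alice", "smith", "bob", "jones", "smith"])
print(looks_random("Alice", "Smith", known))     # False
print(looks_random("xKQpTzW", "RbNvQe", known))  # True
```

Either signal alone would flag some legitimate contacts (a new surname, an all-caps entry), which is why the sketch requires both.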

-

(I wonder how much you're allowed in one comment... let's see...)

An idea for a general-purpose bot-stop/firewall/floodcontrol extension.

Every process that needs protection (e.g. a contribution form or API) will create at least one record when a request is received.

Each record has a type and an assessment of severity as well as IP address and possibly other contextual data that might help analyse or restrict bots - e.g. the query string and POSTed data, if appropriate.

Configuration (sensible defaults should apply) for each type includes:

- The severity (integer): a very dodgy event (e.g. the payment processor returns a fraud error) would have a high severity; an invalid CSRF token would be medium; a successful request would probably have a severity of 1 (but not usually zero - how many people are going to load your contribution form 100 times?)

- Lifetime: how long do we count this for, e.g. 1 hour, 1 day or 6 months etc. Again, different types would have different lifetimes: a successful payment might have a lifetime of an hour, a fraud error a week.

- Zombie time: how long should we count this for at half-severity?

Processes and extensions are free to define their own types and sensible defaults.
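A minimal Python sketch of a per-type configuration and of how one record's contribution could change with age. It assumes the zombie time starts when the lifetime ends (the text doesn't say whether it is part of the lifetime); `EventType` and `current_severity` are illustrative names only.

```python
from dataclasses import dataclass

HOUR = 3600
DAY = 24 * HOUR


@dataclass(frozen=True)
class EventType:
    name: str
    severity: int         # points added to the risk while the record is live
    lifetime: int         # seconds counted at full severity
    zombie_time: int = 0  # further seconds at half severity (assumed to follow lifetime)


def current_severity(event_type: EventType, recorded_at: float, now: float) -> float:
    """Severity that a single record contributes to the risk at time `now`."""
    age = now - recorded_at
    if age < 0:
        return 0.0  # ignore records from the future
    if age < event_type.lifetime:
        return float(event_type.severity)
    if age < event_type.lifetime + event_type.zombie_time:
        return event_type.severity / 2
    return 0.0


declined = EventType("declined_card", severity=4, lifetime=7 * DAY, zombie_time=14 * DAY)
print(current_severity(declined, 0, 1 * DAY))   # 4.0
print(current_severity(declined, 0, 10 * DAY))  # 2.0
print(current_severity(declined, 0, 30 * DAY))  # 0.0
```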

When a request is being processed, we sum the severities of live records to calculate the risk, and check the following to see whether we need to take action:

- Does this exceed the site's configured site-wide limit (could be no limit)?

- Does the risk for this IP exceed the site's configured per-IP address limit?

If either of these is triggered, the IP address is recorded on the block list for a configured time, e.g. 1 day or 2 weeks. We also add a "rejection" record: this type could have a high severity and a long life, to keep the IP on a short leash for a while after the ban is lifted. These records can also be used to spot repeat offenders ("oh, that IP again..."). A cron job handles lifting bans, but a ban is only lifted once the risk has fallen below the thresholds.
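The threshold and ban logic could be modelled in memory roughly like this. It is a sketch under simplifying assumptions: `RiskTracker` and its methods are hypothetical, records live in a plain list rather than DB tables, and zombie time is left out to keep it short.

```python
DAY = 86400


class RiskTracker:
    """In-memory model of the proposed risk/ban logic (a real extension would use DB tables)."""

    def __init__(self, severities, lifetimes, per_ip_limit, site_limit=None,
                 ban_time=DAY, safe_ips=()):
        self.severities = severities   # event type -> severity
        self.lifetimes = lifetimes     # event type -> lifetime in seconds
        self.per_ip_limit = per_ip_limit
        self.site_limit = site_limit   # None means no site-wide limit
        self.ban_time = ban_time
        self.safe_ips = set(safe_ips)  # never banned, e.g. your office IP
        self.records = []              # (ip, event_type, recorded_at)
        self.bans = {}                 # ip -> earliest time the ban may be lifted

    def risk(self, now, ip=None):
        """Sum of live severities, for one IP or (ip=None) for the whole site."""
        return sum(self.severities[t] for rip, t, at in self.records
                   if now - at < self.lifetimes[t] and (ip is None or rip == ip))

    def over_threshold(self, ip, now):
        if self.risk(now, ip) > self.per_ip_limit:
            return True
        return self.site_limit is not None and self.risk(now) > self.site_limit

    def record(self, ip, event_type, now):
        """Record an event; return False if the request should end with a 403."""
        self.records.append((ip, event_type, now))
        if ip in self.bans:
            return False
        if ip in self.safe_ips:
            return True
        if self.over_threshold(ip, now):
            self.bans[ip] = now + self.ban_time
            self.records.append((ip, "rejection", now))  # keeps the IP on a short leash
            return False
        return True

    def cron(self, now):
        """Lift bans whose time is up, but only if the IP is back under the thresholds."""
        for ip, until in list(self.bans.items()):
            if now >= until and not self.over_threshold(ip, now):
                del self.bans[ip]


tracker = RiskTracker(
    severities={"declined_card": 4, "rejection": 16},
    lifetimes={"declined_card": 7 * DAY, "rejection": 2 * DAY},
    per_ip_limit=25,
)
results = [tracker.record("203.0.113.9", "declined_card", now=i) for i in range(7)]
print(results)                                # six True values, then False
print(tracker.risk(now=7, ip="203.0.113.9"))  # 44
```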

Also, if configured to, a cron task sends the blocked IP addresses to a central IP blocking service and fetches the list from it. That way your CiviCRM site can be protected by mine. My idea for this service is that clients would need an API key, and there would be some monitoring, so if some clients were sending loads of IPs we could get in touch and ask "are you sure that's legit?". We'd remember which client sent which IP, so the service could ignore any clients that go rogue.
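A toy Python model of the central service's bookkeeping, assuming clients are identified by API key and that an IP is shared only while at least one non-rogue client has reported it. `SharedBlocklist` and its methods are hypothetical; no such service exists yet.

```python
from collections import defaultdict


class SharedBlocklist:
    """Toy central service that remembers which client reported which IP."""

    def __init__(self, api_keys):
        self.api_keys = set(api_keys)    # keys issued to registered clients
        self.reports = defaultdict(set)  # ip -> set of reporting client keys
        self.rogue = set()               # clients whose reports are now ignored

    def submit(self, api_key, ips):
        if api_key not in self.api_keys:
            raise PermissionError("unknown API key")
        for ip in ips:
            self.reports[ip].add(api_key)

    def report_counts(self):
        """IPs reported per client, for the 'are you sure that's legit?' check."""
        counts = defaultdict(int)
        for clients in self.reports.values():
            for key in clients:
                counts[key] += 1
        return dict(counts)

    def fetch(self):
        """IPs reported by at least one client that is not marked rogue."""
        return sorted(ip for ip, clients in self.reports.items() if clients - self.rogue)


service = SharedBlocklist({"key-a", "key-b"})
service.submit("key-a", ["198.51.100.7"])
service.submit("key-b", ["198.51.100.7", "192.0.2.44"])
service.rogue.add("key-b")
print(service.fetch())  # ['198.51.100.7']
```

Keeping the reporter set per IP (rather than a flat list) is what makes it cheap to drop a rogue client's contributions without losing IPs that others also reported.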

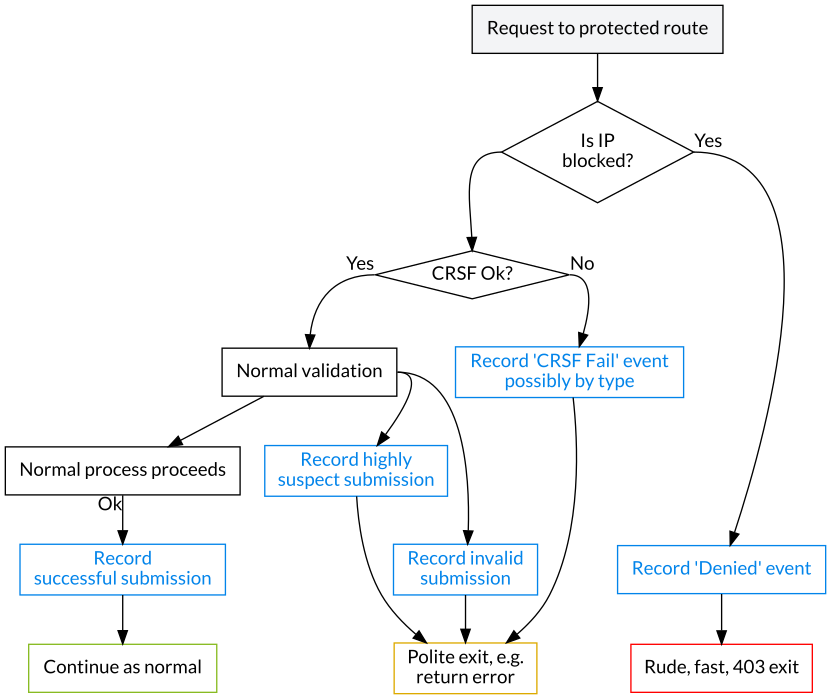

Flowchart

Recording events

When an event is recorded, the severity calculations are run to determine whether an IP address block should be put in place. If so, the process will end abruptly there with a 403.

Example config

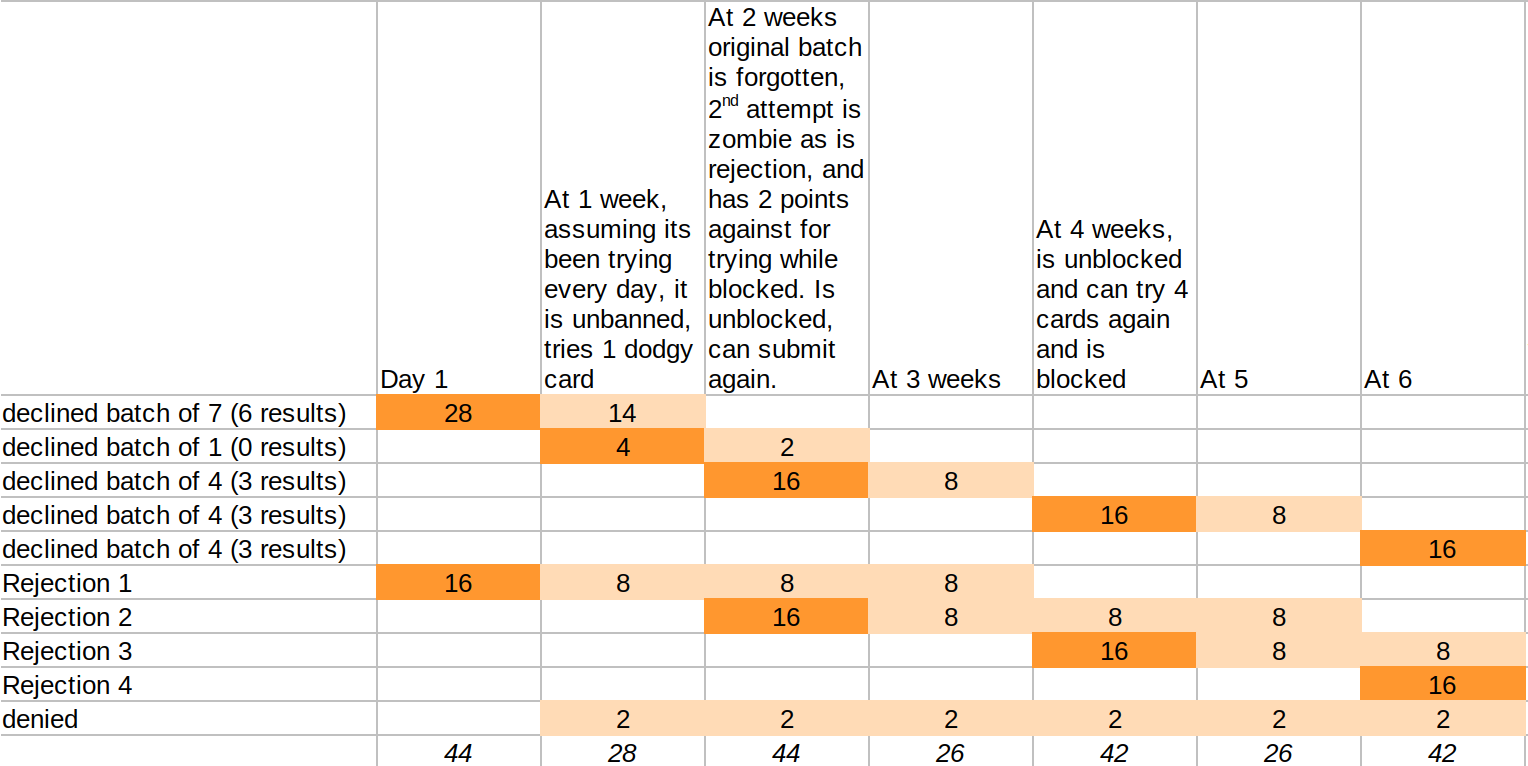

| Type | Severity | Lifespan | Zombie lifespan |
|---|---|---|---|
| Invalid CSRF token | 4 | 1 day | |
| Invalid data | 2 | 1 hour | |
| Custom: dodgy looking (e.g. card company says fraud) | 16 | 1 week | |
| Declined card | 4 | 1 week | 2 weeks |
| Success | 1 | 1 day | |
| Rejection | 16 | 2 days | 1 month |
| Denied (attempted to access while banned) | 2 | 1 day | |

- IP ban time: 1 day

- A list of safe IP addresses is added; these will never be blocked, e.g. your office IP.

The perfect user completes the form correctly first time and scores 1.

A not so perfect but still human user who submits twice with validation errors, again with a declined card, and finally again for success, would score 2 + 2 + 4 + 1 = 9.

Let's say this is a donation form. At most we expect 5 donations per IP address in a week. We expect half of them to be perfect (score 1) and half really bad (score 9), so we set the per-IP risk limit at (1+9)/2*5 = 25.

Site-wide, how many donations are we expecting? Let's say we're hopeful for 10, but dreaming of 100, but honestly there's no way we're going to get 200. Using the same risk estimate as the last para, set the site risk limit at 5*200 = 1000. The site-wide limit is a last resort - once reached it will block everyone who visits! But then if the site is being abused so flagrantly, this might still be sensible (alongside some monitoring!). Site-wide risk can be left blank if you're scared by this...
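A quick check of the arithmetic in the last few paragraphs, using the severities from the example config (the dictionary keys are made-up identifiers for the table rows):

```python
# Severities from the example config (only the types this calculation needs).
SEVERITY = {"invalid_data": 2, "declined_card": 4, "success": 1}

perfect = SEVERITY["success"]                   # valid first time
not_so_perfect = (2 * SEVERITY["invalid_data"]  # two validation errors
                  + SEVERITY["declined_card"]   # one declined card
                  + SEVERITY["success"])        # then success
average = (perfect + not_so_perfect) / 2        # half perfect, half really bad

per_ip_limit = average * 5   # at most 5 donations per IP address in a week
site_limit = average * 200   # "no way we're going to get 200"
print(not_so_perfect, per_ip_limit, site_limit)  # 9 25.0 1000.0
```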

With this in place:

A Selenium or similar bot (or human) that makes 7 declined card submissions in a row generates a risk value of 4×7 = 28, which exceeds the per-IP threshold of 25, so its IP address gets banned (the bot will not learn the outcome of the 7th card). The rejection is also recorded, adding its own severity, so the IP's risk is now 28 + 16 = 44.

If it keeps trying while blocked, it will clock up more risk (scoring +2 for each denied attempt), though these are only configured to count for a day.

When cron runs to check the block list a day later, it finds that the IP is due for release (we've set the ban time to 1 day); however, it notices that the IP is still over the threshold (it still has a score of 44 because the events that led up to the ban have not yet expired), so the block is left in place.
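The scenario can be replayed against the example config with a short Python script. It assumes "1 month" is 30 days and that a zombie period begins when the lifespan ends, counting at half severity; `CONFIG` and `risk` are illustrative names.

```python
HOUR = 3600
DAY = 24 * HOUR

# (severity, lifespan, zombie lifespan) from the example config. Assumptions:
# "1 month" = 30 days, and a zombie period starts when the lifespan ends.
CONFIG = {
    "invalid_csrf": (4, DAY, 0),
    "invalid_data": (2, HOUR, 0),
    "dodgy_looking": (16, 7 * DAY, 0),
    "declined_card": (4, 7 * DAY, 14 * DAY),
    "success": (1, DAY, 0),
    "rejection": (16, 2 * DAY, 30 * DAY),
    "denied": (2, DAY, 0),
}
PER_IP_LIMIT = 25


def risk(events, now):
    """Sum live severities; records in their zombie period count at half severity."""
    total = 0.0
    for event_type, at in events:
        severity, lifespan, zombie = CONFIG[event_type]
        age = now - at
        if 0 <= age < lifespan:
            total += severity
        elif lifespan <= age < lifespan + zombie:
            total += severity / 2
    return total


# One IP submits declined cards a minute apart until it crosses the limit.
events = []
for i in range(7):
    events.append(("declined_card", i * 60))
    if risk(events, i * 60) > PER_IP_LIMIT:
        break
ban_at = events[-1][1]
events.append(("rejection", ban_at))  # the ban itself is recorded too

print(len(events) - 1, risk(events, ban_at))      # 7 44.0
print(risk(events, ban_at + DAY) > PER_IP_LIMIT)  # True: the ban stays in place
```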

Here's how it plays out:

It ends up with the bot getting away with 3 results every 2 weeks per IP address at its disposal. This is quite a reasonable mitigation (compare that with 1,200 cards tried in a few hours).

However, a keen anti-fraudster could put in place their own rules (we could have a UI for this one day), e.g. I've noticed that a lot of the emails being used by the bot attacking one of my sites have something in common. So I could easily record an event when that pattern is found and block the IP for a month or so.

CSRF API Pseudocode

(It kinda looks like ES6 JavaScript, but it's not, and most if not all of this would be done in PHP; I just found this a compact way to write pseudocode.)

```
get_csrf({
  validFrom: time() + 15,  // 15 seconds from now
  validTo: time() + 300,   // 5 mins from now
  secret: 'blah',          // SHOULD be provided
  public: 'donation',      // MAY be provided
});
// Implementation:
if (!secret && !public) public = entropyString();
token = (public ? public + '.' : '') + validFrom + '.' + validTo;
token += '.' + hash(token + secret + SECRET_SALT);
return token; // donation.123123223.131231231.26f1f82a4ccd07a855a35297

check_csrf(token, secret, public);
// Implementation:
if (empty(token)) return 'missing';
if (!parse(token)) return 'syntax';
if (time() < validFrom) return 'early';
if (time() > validTo) return 'late';
if (public && public !== parsedPublic) return 'irrelevant';
if (hash(stuffFromToken + secret + SECRET_SALT) === hashFromToken) return 'ok';
return 'invalid';
```

Forms that rely on _SESSION

Wherever possible, we make the token valid for our particular use - e.g. a particular contribution page.

```
// Send CSRF
csrf = get_csrf({
  validFrom,
  validTo,
  secret: getSessionKey() + 'contributionPage' + pageID + getIpAddress(),
});
// Check CSRF: the secret must be rebuilt exactly as it was when sending
result = check_csrf(inputToken, getSessionKey() + 'contributionPage' + pageID + getIpAddress());
```

Forms that need to be stateless

This could be used for custom stuff, or as an external API, e.g. if the website and CiviCRM are separate.

```
// Get CSRF
csrf = get_csrf({ validFrom, validTo, secret: getIpAddress() + 'donation' });
// Check CSRF
result = check_csrf(inputToken, getIpAddress() + 'donation');
```

Other notes

- tying the IP address to the token has the advantage that it can't be passed around. However, it may not always be appropriate and is not required; it's a MAY, not a MUST.

- tying the token to a purpose is a good thing.

- you can use "public" to include data in the visible part of the token, as the dates are. This could help identify when a token is being used in the wrong context, which is either (a) a coding error or (b) definitely suspect/a forgery. Further, you could easily test whether the token would have been valid for the purpose stated in the token itself, which again could help with assessing risk. This part is not super important to me.

- getting a token without specifying secret or public data will result in one based on random public data, but it's much better to use a specific purpose string, in either the secret or the public part.
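A runnable Python sketch of the `get_csrf`/`check_csrf` pseudocode. It swaps the pseudocode's `hash(token + secret + SECRET_SALT)` for an HMAC-SHA256 keyed with the salt and compares signatures in constant time; `SECRET_SALT` is a placeholder, and it assumes the public part never contains a dot.

```python
import hashlib
import hmac
import secrets
import time
from typing import Optional

SECRET_SALT = b"replace-with-a-long-random-site-key"  # placeholder site-wide key


def _sign(payload: str, secret: str) -> str:
    # HMAC keyed with the site salt over the visible payload plus the private secret.
    message = (payload + "\x00" + secret).encode()
    return hmac.new(SECRET_SALT, message, hashlib.sha256).hexdigest()


def get_csrf(valid_from: int, valid_to: int, secret: str = "", public: str = "") -> str:
    if not secret and not public:
        public = secrets.token_hex(8)  # random public part if nothing else is given
    payload = f"{public}.{valid_from}.{valid_to}" if public else f"{valid_from}.{valid_to}"
    return payload + "." + _sign(payload, secret)


def check_csrf(token: str, secret: str = "", public: str = "",
               now: Optional[float] = None) -> str:
    if not token:
        return "missing"
    parts = token.split(".")
    if len(parts) == 4:
        parsed_public, valid_from, valid_to, signature = parts
    elif len(parts) == 3:
        parsed_public = ""
        valid_from, valid_to, signature = parts
    else:
        return "syntax"
    if not (valid_from.isdecimal() and valid_to.isdecimal()):
        return "syntax"
    now = time.time() if now is None else now
    if now < int(valid_from):
        return "early"
    if now > int(valid_to):
        return "late"
    if public and public != parsed_public:
        return "irrelevant"
    payload = token.rsplit(".", 1)[0]
    if hmac.compare_digest(_sign(payload, secret).encode(), signature.encode()):
        return "ok"
    return "invalid"


now = int(time.time())
secret = "session-key" + "contributionPage" + "1"  # e.g. session key + purpose + page ID
token = get_csrf(now + 15, now + 300, secret=secret, public="donation")
print(check_csrf(token, secret, "donation", now=now))        # early
print(check_csrf(token, secret, "donation", now=now + 60))   # ok
print(check_csrf(token, "other", "donation", now=now + 60))  # invalid
print(check_csrf(token, secret, "donation", now=now + 600))  # late
```

Because the dates sit in the signed payload, a client that edits `validFrom`/`validTo` to dodge the window ends up with `invalid` once the signature is checked.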

CSRF Implementation

If you know your form is not going to be cached, you could generate a CSRF token in PHP while rendering it. Alternatively, you could send the form without a CSRF token and have background JavaScript fetch a token and insert it. You could run that on a `window.setInterval` so that a lazy donor who opens your donation page, goes to lunch and then comes back doesn't end up with a stale token.

Other APIs

Again, pseudocode. I'm using Moat as a working name.

```
Moat::assertIpIsNotBanned(); // exits rudely with 403 if it is.
```

Record an event. The event names should be declared somewhere (e.g. by hooks or container config in extensions; I imagine we'd ship some suggestions).

```
Moat::recordEvent("successful_donation");
// or
Moat::recordEvent("card_fraud", {inputData}); // record with metadata for analysis/study
// These could be API calls instead of direct function calls.
```

I realise this is probably a bit daunting, but I'm fed up of writing the same code and I'm fed up with bots!
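A minimal Python analogue of the Moat calls, assuming event types are declared up front (standing in for hooks or container config) and that a ban raises an exception which the web layer would turn into a 403, rather than exiting. `Moat`, `Forbidden` and the method names are hypothetical, and the IP is passed in explicitly where PHP would read it from the request.

```python
class Forbidden(Exception):
    """Stands in for "exit rudely with a 403"; a web layer would map it to HTTP 403."""


class Moat:
    event_types = {}  # event name -> severity, declared by extensions
    banned_ips = set()
    log = []          # (ip, event name, metadata) kept for forensic analysis

    @classmethod
    def declare_event(cls, name, severity):
        cls.event_types[name] = severity

    @classmethod
    def assert_ip_is_not_banned(cls, ip):
        if ip in cls.banned_ips:
            raise Forbidden(ip)

    @classmethod
    def record_event(cls, ip, name, metadata=None):
        # Undeclared names are rejected so typos don't silently create new event types.
        if name not in cls.event_types:
            raise ValueError(f"undeclared event type: {name}")
        cls.log.append((ip, name, metadata or {}))
        # A full implementation would recompute the risk here and ban if needed.


Moat.declare_event("successful_donation", 1)
Moat.declare_event("card_fraud", 16)
Moat.record_event("198.51.100.7", "successful_donation")
Moat.record_event("198.51.100.7", "card_fraud", {"email": "someone@example.org"})

Moat.banned_ips.add("192.0.2.1")
try:
    Moat.assert_ip_is_not_banned("192.0.2.1")
except Forbidden:
    print("403")
```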

-

@artfulrobot pretty much agree with everything :-) So I think we need a bit of a working group / chat about this. Can this be moved to an issue or something so it's easier to find?

One issue I had when writing firewall is that you have to know which events/classes to trigger. We will probably need a small "shim" in core eventually that lets us trigger various events without knowing whether any protection (moat/firewall/floodcontrol) is installed. I think that's fairly easy to achieve with Symfony, as e.g. firewall can just listen for the events it's interested in (as it already does), and if it's not installed the events are simply ignored.
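The shim idea can be illustrated with a minimal dispatcher, loosely modelled on Symfony's EventDispatcher (this is not its API). Dispatching an event nobody listens to is a no-op, so core code doesn't need to know whether any protection extension is installed. The event name `civi.security.invalid_csrf` is made up for the example.

```python
from collections import defaultdict
from typing import Callable


class EventDispatcher:
    """Minimal stand-in for a Symfony-style dispatcher in core."""

    def __init__(self):
        self._listeners = defaultdict(list)

    def add_listener(self, event_name: str, listener: Callable[[dict], None]) -> None:
        self._listeners[event_name].append(listener)

    def dispatch(self, event_name: str, payload: dict) -> dict:
        # With no listeners registered this does nothing, so core can dispatch
        # freely whether or not a firewall/moat/floodcontrol extension exists.
        for listener in self._listeners.get(event_name, []):
            listener(payload)
        return payload


dispatcher = EventDispatcher()
# Core code: fire and forget; nothing is listening yet.
dispatcher.dispatch("civi.security.invalid_csrf", {"ip": "203.0.113.9"})

# A protection extension, if installed, subscribes to the events it cares about.
seen = []
dispatcher.add_listener("civi.security.invalid_csrf", lambda p: seen.append(p["ip"]))
dispatcher.dispatch("civi.security.invalid_csrf", {"ip": "203.0.113.9"})
print(seen)  # ['203.0.113.9']
```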

-

Thanks for reading and commenting @mattwire! I like the idea of generic events that nobody has to listen to - like hooks.

I really like the idea that people can use a firewall type extension (core service one day?) to get and check CSRF tokens, check ban lists and report happenings. We just need a flexible enough interface/contract for these services.

It would be good to have a chat - maybe we'll get some time in Birmingham?
